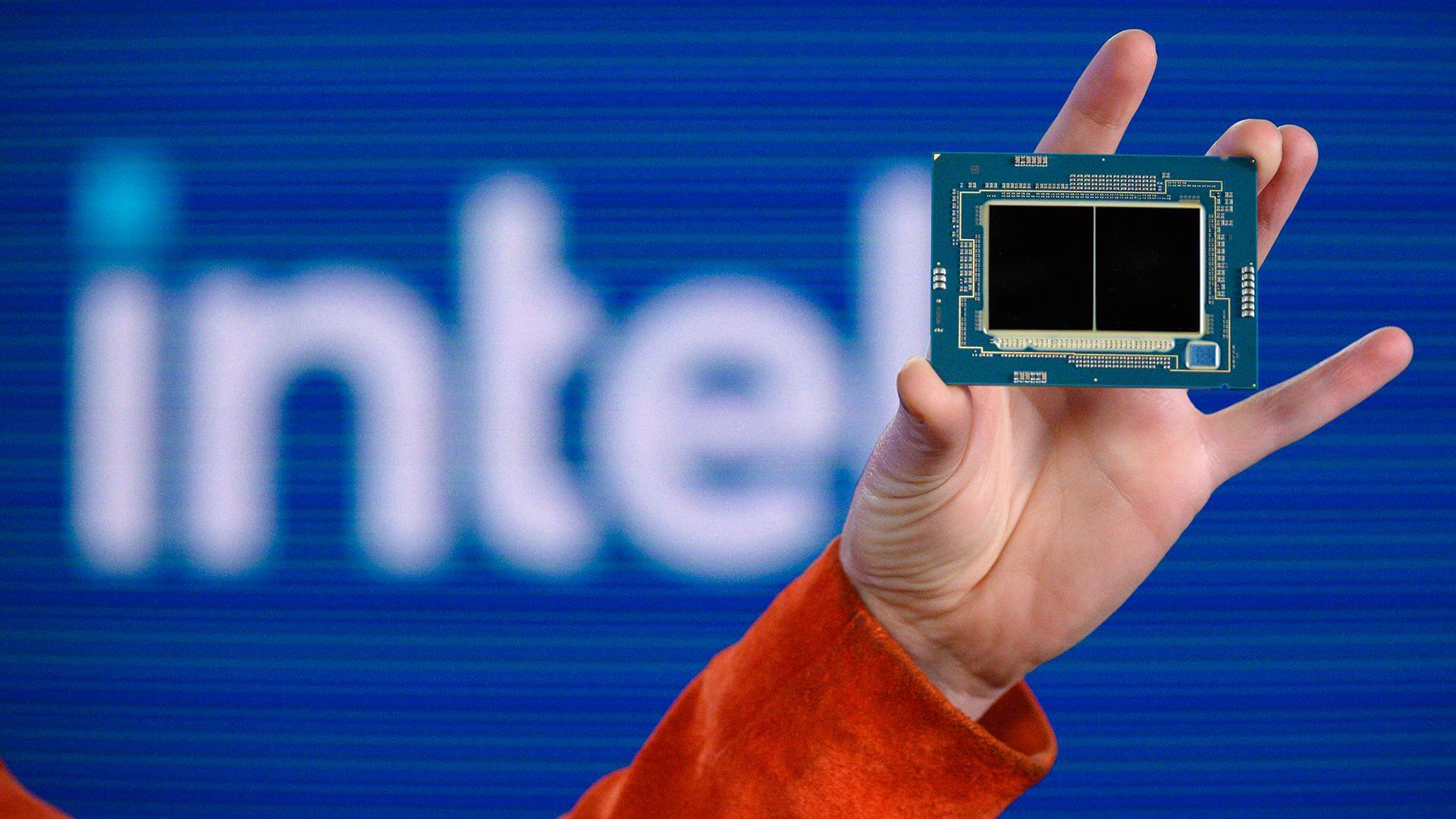

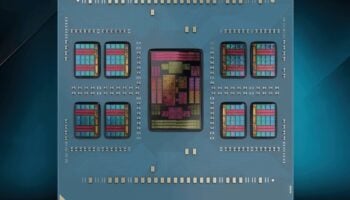

Intel is prepping its next-gen Xeon Scalable processors for a late 2023 release. Emerald Rapids-SP will be socket-compatible with Sapphire Rapids-SP and use the same process node (Intel 7), with one key difference. While Sapphire Rapids comprised four 15-core chiplets, its successor will use only two larger dies with 32 functional cores each. Two cores in total will be disabled to improve yields, bringing the max core count on Emerald Rapids to 64.

Intel used the space freed up by this approach to cram nearly thrice as much SRAM (L3 cache) onto the next-gen Xeons. Of course, this also meant increasing the density of the compute die by quite a bit. Each Emerald Rapids core has access to 5MB of L3 cache, up from just 1.87MB on Sapphire Rapids.

Sapphire Rapids-SP was limited to 105MB of L3 cache on its fastest models. Emerald Rapids-SP will feature up to 320MB of L3 cache, which is even more than its 64-core AMD Epyc Genoa rivals, which pack 256MB of LLC.
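The cache figures quoted here can be cross-checked with some quick arithmetic. The snippet below is only a sketch using the numbers reported in this article (64 cores, 5MB of L3 per core, 105MB on Sapphire Rapids, 256MB on the 64-core Epyc); the function and variable names are made up for illustration.

```python
def total_l3_mb(cores: int, l3_per_core_mb: float) -> float:
    """Total L3 capacity in MB, assuming every core contributes an equal slice."""
    return cores * l3_per_core_mb


# Figures as reported in the article (not measured values).
emerald_total = total_l3_mb(cores=64, l3_per_core_mb=5.0)  # max Emerald Rapids config
sapphire_total = 105.0  # MB, fastest Sapphire Rapids models
epyc_total = 256.0      # MB, 64-core Epyc Genoa

# How much more L3 Emerald Rapids carries than its predecessor.
ratio_vs_sapphire = emerald_total / sapphire_total

# L3 per core on the 64-core Epyc, for comparison with Intel's 5MB per core.
epyc_per_core = epyc_total / 64

print(f"Emerald Rapids total L3: {emerald_total:.0f}MB")
print(f"Increase over Sapphire Rapids: {ratio_vs_sapphire:.2f}x")
print(f"Epyc L3 per core: {epyc_per_core:.0f}MB")
```

The 64 x 5MB product matches the 320MB headline figure, and the roughly 3x ratio over 105MB is where the "nearly thrice" claim comes from.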

Regardless of the above, Emerald Rapids will be more expensive for Intel to manufacture, leading to slimmer profit margins and a lower operating income for the Data Center Group.

Source: SemiAnalysis.