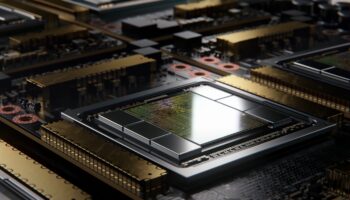

The graphics card market is all set to get a third player, with Intel's Xe-HPG "Alchemist" graphics cards landing in the first quarter of 2022. Advanced upscaling technologies such as NVIDIA's DLSS have become one of the primary driving factors of modern game graphics. Intel aims to compete in this segment with its XeSS upscaling technology: essentially a DLSS clone, but with more flexibility and an open-source framework.

> We want the benefits of XᵉSS to be available to a broad audience, so we developed an additional version based on the DP4a instruction, which is supported by competing GPUs and Intel Xᵉ LP-based integrated and discrete graphics. Our XᵉSS APIs are designed to integrate into today's game engines, and we're committed to working with the development community. The SDK for the initial version of XᵉSS based on XMX will be available to ISVs starting this month, while the DP4a version is due later this year. We believe in open-source standards and will open up the tools and SDKs for XᵉSS as they mature.
>
> — Intel, via Medium

However, as with DLSS, XeSS has to be integrated at the engine level, with a fair amount of work from developers, to avoid the usual pitfalls of temporal upsampling and AI-based upscaling: ghosting, shimmering, hallucinated detail, and so on. Adoption will therefore take a while and will likely lag behind DLSS for the time being. NVIDIA has already worked with several dozen developers, both indie and AAA, to bring its tech to more than 60 titles.
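
Part of the reason engine-level work is unavoidable: a temporal upscaler reuses its previous output (the "history") and needs to know where each pixel's content came from. Temporal upscalers such as DLSS 2 get this from per-pixel motion vectors supplied by the engine. The 1D Python sketch below is a generic illustration of that idea, not XeSS's actual algorithm; the helpers `resolve` and `simulate` and the blend factor `alpha = 0.1` are hypothetical. It pans a single bright pixel one pixel per frame and blends each new frame into the history, once with motion vectors and once without.

```python
from typing import List


def resolve(history: List[float], current: List[float],
            motion: List[int], alpha: float = 0.1) -> List[float]:
    """Blend the current frame into reprojected history (hypothetical helper).

    motion[i] is how many pixels the content now at i moved since the last
    frame (positive = rightwards), i.e. an engine-supplied motion vector.
    """
    n = len(current)
    out = []
    for i in range(n):
        src = i - motion[i]  # where this pixel's content was last frame
        # No valid history (content entered from off-screen): use the current frame.
        prev = history[src] if 0 <= src < n else current[i]
        # Exponential moving average: mostly history, a little of the new frame.
        out.append(alpha * current[i] + (1 - alpha) * prev)
    return out


def simulate(frames: int, width: int, use_motion: bool) -> List[float]:
    """Pan a one-pixel bright object right by 1 px per frame; return final output."""
    history = [1.0 if x == 0 else 0.0 for x in range(width)]
    for t in range(1, frames):
        current = [1.0 if x == t else 0.0 for x in range(width)]
        # Camera pan: all content moved by 1 px. Without engine data we
        # can only assume zero motion.
        motion = [1 if use_motion else 0] * width
        history = resolve(history, current, motion)
    return history


without_mv = simulate(frames=6, width=10, use_motion=False)
with_mv = simulate(frames=6, width=10, use_motion=True)
print("no motion vectors:  ", [round(v, 2) for v in without_mv])
print("with motion vectors:", [round(v, 2) for v in with_mv])
```

Without motion vectors, the stale bright pixel at x = 0 lingers at 0.9⁵ ≈ 0.59 while the object itself only reaches 0.1, a textbook ghost trail. With motion vectors, the history lines up with the current frame and the object stays crisp. Real upscalers also have to handle disocclusions, transparency, and particles that motion vectors describe poorly, which is where much of the per-engine tuning goes.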

Intel is planning to make up for its late entry into the gaming segment by making XeSS open source. It will also be supported on competing NVIDIA and AMD hardware via DP4a, an instruction that computes INT8 dot products with 32-bit accumulation (mixed-precision compute). DP4a works on Gen12 Xe and on recent NVIDIA and AMD GPUs, so they should all support XeSS in some capacity. The best implementation of XeSS, which runs on the XMX (Xe Matrix eXtensions) engines, Intel's counterpart to NVIDIA's Tensor cores, will be limited to the Alchemist lineup. Going by the official slides, XMX has only a minimal advantage over DP4a, but it's important to take first-party figures with a grain of salt.
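
For readers wondering what DP4a actually computes: it treats each of two 32-bit registers as four packed 8-bit integers, multiplies them lane by lane, and adds the four products to a 32-bit accumulator. On NVIDIA hardware, CUDA exposes this as the `__dp4a` intrinsic (compute capability 6.1 and later). The Python sketch below emulates the signed variant in software purely for illustration; the helper names are hypothetical, and overflow of the 32-bit accumulator is deliberately not modelled.

```python
from typing import List, Sequence


def pack_int8x4(lanes: Sequence[int]) -> int:
    """Pack four signed 8-bit integers into one 32-bit word (lane 0 = low byte)."""
    if len(lanes) != 4 or not all(-128 <= v <= 127 for v in lanes):
        raise ValueError("need exactly four values in [-128, 127]")
    word = 0
    for i, v in enumerate(lanes):
        word |= (v & 0xFF) << (8 * i)  # two's-complement byte in lane i
    return word


def unpack_int8x4(word: int) -> List[int]:
    """Split a 32-bit word back into four signed 8-bit lanes."""
    lanes = []
    for i in range(4):
        byte = (word >> (8 * i)) & 0xFF
        lanes.append(byte - 256 if byte >= 128 else byte)  # sign-extend
    return lanes


def dp4a(a: int, b: int, c: int) -> int:
    """Signed DP4a: c + a0*b0 + a1*b1 + a2*b2 + a3*b3.

    Inputs are 8-bit lanes and the accumulator is meant to be 32-bit;
    accumulator overflow is not modelled in this sketch.
    """
    return c + sum(x * y for x, y in zip(unpack_int8x4(a), unpack_int8x4(b)))


def int8_dot(u: Sequence[int], v: Sequence[int]) -> int:
    """Dot product of two INT8 vectors (length a multiple of 4) via chained DP4a."""
    if len(u) != len(v) or len(u) % 4:
        raise ValueError("vectors must have equal length divisible by 4")
    acc = 0
    for i in range(0, len(u), 4):
        acc = dp4a(pack_int8x4(u[i:i + 4]), pack_int8x4(v[i:i + 4]), acc)
    return acc


print(dp4a(pack_int8x4([1, -2, 3, 4]), pack_int8x4([5, 6, -7, 8]), 10))
print(int8_dot([127] * 8, [127] * 8))
```

A single product such as 127 × 127 = 16129 already overflows 8 bits, and a sum of four of them (64516) exceeds even 16 bits, which is why the inputs can stay at INT8 while the accumulator has to be wider. Chaining DP4a calls this way is how a longer INT8 dot product, the core operation of a quantized neural network layer, can be built from the instruction.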

> Many gamers are also creators, so we're developing robust capture capabilities that leverage our powerful encoding hardware. These include a virtual camera with AI assist and recorded game highlights that save your best moments. We're even integrating overclocking controls into the driver UI to give enthusiasts the tools they need to push the hardware to the limit.

Finally, Intel is also planning to integrate a manual overclocking tool into its graphics control panel, leaving NVIDIA as the only vendor without built-in overclocking in its own software. That's a minor point, though, and should change soon. The hardware side of Intel's graphics journey looks solid. Now it'll be up to the driver team to come through, or it'll be a really bumpy ride!