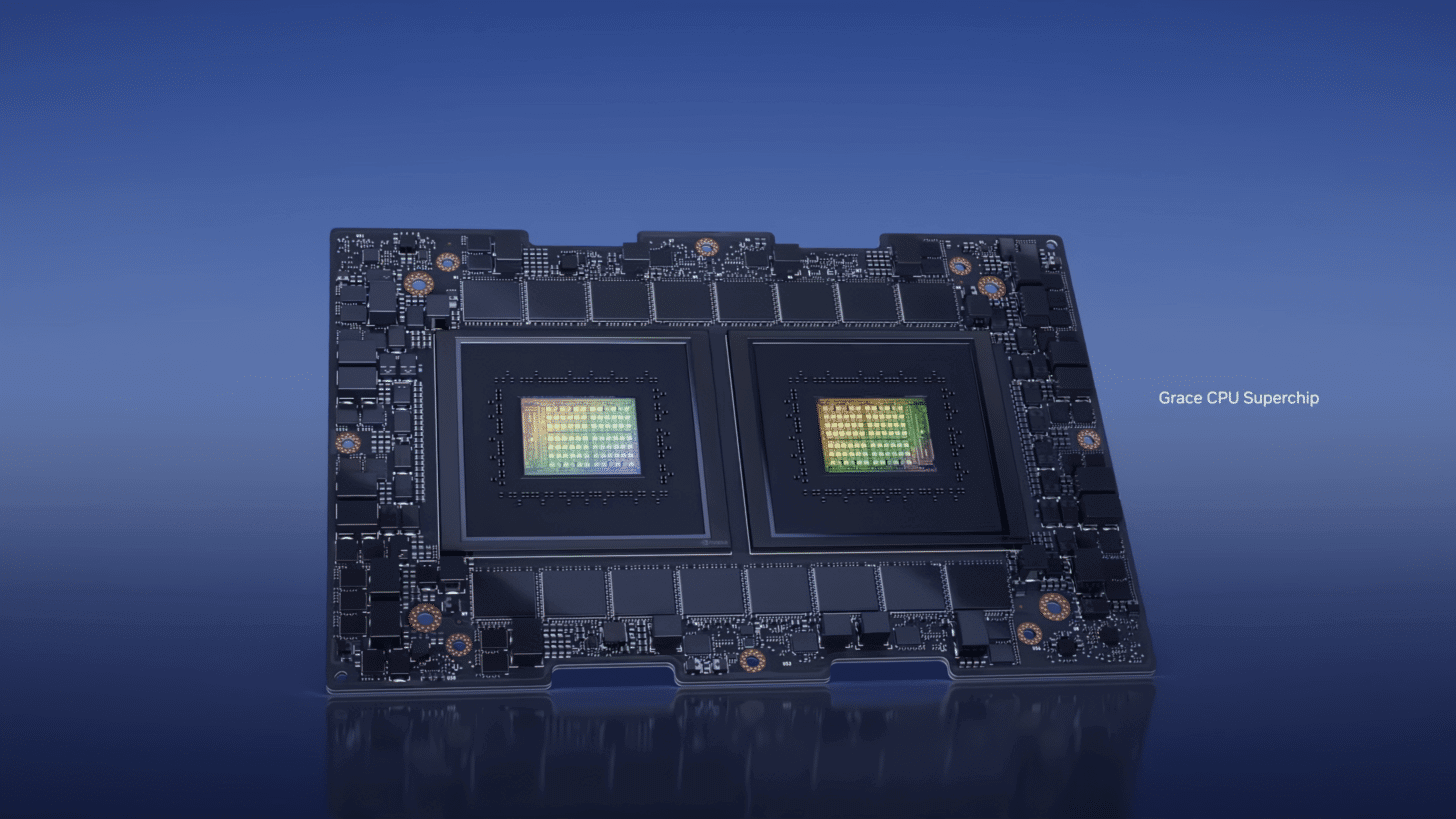

NVIDIA has announced the sampling of its Grace CPU, promising twice as much compute throughput as its Intel/AMD rivals at notably lower power consumption. Aimed at HPC, Big Data, and inferencing workloads, these Arm-based processors will go up against the latest Epyc and Xeon data center processors. The Grace Superchip is NVIDIA’s first CPU with a paired modular design (two monolithic dies per node rather than chiplets).

The Grace Superchip features two Grace CPUs, each with 72 cores (144 overall) alongside 117MB of L3 cache per die, or 234MB overall. It supports a unified memory architecture with shared page tables. The scalable coherency fabric has a distributed cache design with a bisection bandwidth of 3.225 TB/s.

Instead of two Grace CPUs, one Grace CPU and one Hopper GPU can be paired with heterogeneous coherency. The two chips share the same virtual address space, which lets the GPU access pageable memory.

In addition, a Grace CPU on one Superchip can be connected through an NVSwitch to a Hopper GPU on another Superchip and access its VRAM at native NVLink speeds.

For memory, NVIDIA has gone with LPDDR5X (up to 960GB) across 32 channels, delivering up to 1TB/s of memory bandwidth. LPDDR5X provides 53% more bandwidth than DDR5 at one-eighth the power and at a similar cost. HBM2e was a viable alternative, but at a price premium of more than 3x.

Each Grace CPU comes with 68 PCIe Gen 5 lanes. Of these, 64 can be used as four x16 links with a bandwidth of 128GB/s each, while the remaining four are meant for miscellaneous use. The 144-core Superchip has a TDP of 500W.
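
The PCIe lane and bandwidth figures can be cross-checked with a little arithmetic. The sketch below is only a back-of-the-envelope estimate: it assumes the links are x16, uses the raw PCIe Gen 5 signalling rate of 32 GT/s per lane, ignores encoding and protocol overhead, and counts both directions of a link. The constant and function names are illustrative, and the count of eight x16 interfaces per Superchip is taken from the specification table.

```python
# Back-of-the-envelope PCIe Gen 5 arithmetic (raw rates, overhead ignored).
GT_PER_S_PER_LANE = 32      # PCIe Gen 5 raw rate per lane, one bit per transfer
LANES_PER_LINK = 16         # assumption: the article's links are x16
TOTAL_LANES = 68            # lane count quoted in the article
X16_LINKS = 4               # x16 links quoted in the article
SUPERCHIP_X16_LINKS = 8     # x16 interfaces per Superchip, from the spec table


def link_bandwidth_gb_s(lanes: int, bidirectional: bool = True) -> float:
    """Raw bandwidth of a PCIe Gen 5 link in GB/s."""
    per_direction = GT_PER_S_PER_LANE * lanes / 8  # bits to bytes
    return per_direction * (2 if bidirectional else 1)


lanes_in_x16_links = X16_LINKS * LANES_PER_LINK
misc_lanes = TOTAL_LANES - lanes_in_x16_links
x16_bw = link_bandwidth_gb_s(LANES_PER_LINK)
superchip_bw = SUPERCHIP_X16_LINKS * x16_bw

print(f"Lanes in x16 links: {lanes_in_x16_links}, left over: {misc_lanes}")
print(f"One x16 link: {x16_bw:.0f} GB/s bidirectional")
print(f"Eight x16 links: {superchip_bw:.0f} GB/s")
```

Under these assumptions, four x16 links account for 64 of the 68 lanes, one x16 link works out to 128 GB/s, and eight such links add up to roughly the 1 TB/s total listed in the specifications.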

| Specification | Grace CPU Superchip |
| --- | --- |
| Core architecture | Neoverse V2 cores: Armv9 with 4x128b SVE2 |
| Core count | 144 |
| Cache | L1: 64 KB I-cache + 64 KB D-cache per core; L2: 1 MB per core; L3: 234 MB per Superchip |
| Memory technology | LPDDR5X with ECC, co-packaged |
| Raw memory BW | Up to 1 TB/s |
| Memory size | Up to 960 GB |
| FP64 peak | 7.1 TFLOPS |
| PCI Express | 8x PCIe Gen 5 x16 interfaces with option to bifurcate; total 1 TB/s PCIe bandwidth; additional low-speed PCIe connectivity for management |
| Power | 500 W TDP with memory, 12 V supply |
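
A few derived figures help put these specifications in context. The sketch below is a rough estimate rather than anything NVIDIA publishes: it assumes each of the four 128-bit SVE2 pipes can issue one FP64 fused multiply-add per cycle (counted as two FLOPs), and it treats the peak numbers as simultaneously achievable. All variable names are illustrative.

```python
# Derived metrics from the specification table (rough estimates only).
CORES = 144
FP64_PEAK_TFLOPS = 7.1
MEM_BW_TB_S = 1.0
TDP_W = 500
L3_MB = 234
SVE_PIPES = 4        # "4x128b SVE2"
VECTOR_BITS = 128

# Assumption: every pipe issues one FP64 FMA per cycle, and an FMA is 2 FLOPs.
fp64_lanes_per_pipe = VECTOR_BITS // 64
flops_per_cycle_per_core = SVE_PIPES * fp64_lanes_per_pipe * 2

# Clock speed that the quoted peak would imply under that assumption.
implied_clock_ghz = FP64_PEAK_TFLOPS * 1e12 / (CORES * flops_per_cycle_per_core) / 1e9

gflops_per_watt = FP64_PEAK_TFLOPS * 1e3 / TDP_W    # peak FP64 efficiency
bytes_per_flop = MEM_BW_TB_S / FP64_PEAK_TFLOPS     # memory bytes per peak FLOP
l3_per_core_mb = L3_MB / CORES                      # shared L3 divided evenly

print(f"FP64 FLOPs per cycle per core: {flops_per_cycle_per_core}")
print(f"Implied clock: {implied_clock_ghz:.2f} GHz")
print(f"Peak efficiency: {gflops_per_watt:.1f} GFLOPS/W")
print(f"Memory balance: {bytes_per_flop:.2f} bytes/FLOP")
print(f"L3 per core: {l3_per_core_mb:.3f} MB")
```

With these assumptions, the 7.1 TFLOPS peak corresponds to a clock of about 3.08 GHz, roughly 14.2 GFLOPS per watt at the 500 W TDP, and about 0.14 bytes of memory bandwidth per peak FP64 FLOP.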