At the annual Supercomputing 2021 conference, Intel shared some interesting details regarding its Ponte Vecchio (PVC) HPC accelerator. The graphics cores, memory, cache subsystem, I/O, and the process nodes powering the next-gen HPC GPU were all detailed. Intel also touched on the Sapphire Rapids-SP (SPR) lineup, highlighting the differences between the standard and HBM variants, with a look at how SPR and PVC will soon power the fastest supercomputer ever designed.

Starting with Ponte Vecchio, each GPU has 128 Xe cores (compute units) for a total of 1,024 vector units per GPU. These are paired with 1,024 matrix engines, 128 ray-tracing units, 64MB of L1 cache, and a massive 408MB of L2 cache. In terms of memory, the GPU is paired with 128GB of HBM2e (possibly across eight stacks). Each GPU is connected to seven others via Xe Link, a high-speed coherent unified fabric (Intel’s answer to AMD’s Infinity Fabric). There’s support for PCIe Gen 5, and the various compute, memory, and cache tiles are connected using Foveros 3D stacking and the EMIB high-speed interconnect. Finally, there’s the matter of the process nodes. Some of the tiles (most likely the compute tiles) are fabbed on the Intel 7 node, while the rest will be fabbed on TSMC’s 5nm (N5) and 7nm (N7) process nodes. It’s worth noting that Intel 7 is comparable to N7 while being a fair bit inferior to N5.
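To put the totals above in per-core terms, here is a minimal arithmetic sketch. It uses only the figures quoted in this article; the constant and function names are made up for illustration, and the eight-stack HBM2e layout is the unconfirmed guess from the text, not an Intel-confirmed figure.

```python
# Per-GPU totals for Ponte Vecchio as quoted in the article.
XE_CORES = 128
VECTOR_ENGINES = 1024
MATRIX_ENGINES = 1024
L1_CACHE_MB = 64
HBM_GB = 128
HBM_STACKS = 8  # assumption: the article itself marks this count as uncertain


def per_core(total, cores=XE_CORES):
    """Divide a per-GPU total evenly across the Xe cores."""
    return total / cores


vector_per_core = per_core(VECTOR_ENGINES)     # vector engines per Xe core
matrix_per_core = per_core(MATRIX_ENGINES)     # matrix engines per Xe core
l1_kb_per_core = per_core(L1_CACHE_MB * 1024)  # L1 cache per Xe core, in KB
hbm_gb_per_stack = HBM_GB / HBM_STACKS         # only valid if there are 8 stacks

print(f"{vector_per_core:.0f} vector + {matrix_per_core:.0f} matrix engines per Xe core")
print(f"{l1_kb_per_core:.0f} KB of L1 per Xe core")
print(f"{hbm_gb_per_stack:.0f} GB per HBM2e stack (assuming {HBM_STACKS} stacks)")
```

Under these numbers, each Xe core works out to eight vector engines, eight matrix engines, and 512KB of L1.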

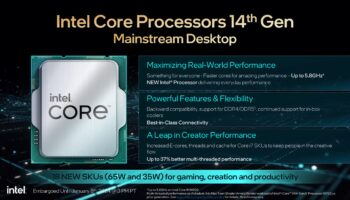

On the CPU side, we have the 4th Gen Xeon Scalable Sapphire Rapids processor. With a total core count of up to 56 and a quad-chiplet design, it’ll also take advantage of on-package HBM2e memory, connected to the I/O and cores using EMIB. The HBM variants of SPR will pack up to 64GB of HBM2e, distributed across four 8-Hi stacks of 16GB each, with an overall bandwidth of up to 1.64 TB/s. Each SPR CPU will be paired with four Ponte Vecchio compute tiles in the Aurora Supercomputer using EMIB.
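The HBM figures for Sapphire Rapids can be sanity-checked with a few lines of arithmetic. This sketch assumes capacity and bandwidth are split evenly across the four stacks, which the article does not state explicitly; all names are illustrative.

```python
# HBM2e configuration for the Sapphire Rapids HBM variant, as quoted above.
STACKS = 4
GB_PER_STACK = 16
DIES_PER_STACK = 8           # "8-Hi" means eight DRAM dies per stack
TOTAL_BANDWIDTH_GBPS = 1640  # 1.64 TB/s expressed in GB/s

total_capacity_gb = STACKS * GB_PER_STACK
gb_per_die = GB_PER_STACK / DIES_PER_STACK
# Assumption: bandwidth is divided evenly between the stacks.
bandwidth_per_stack_gbps = TOTAL_BANDWIDTH_GBPS / STACKS

print(f"Total HBM2e capacity: {total_capacity_gb} GB")
print(f"Capacity per DRAM die: {gb_per_die:.0f} GB")
print(f"Bandwidth per stack: {bandwidth_per_stack_gbps:.0f} GB/s")
```

The four stacks add up to the quoted 64GB, and an even split would put each stack at roughly 410 GB/s.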

Sapphire Rapids-SP can be run in three modes. The first is HBM-only mode, which uses only the on-package HBM as the main system memory, ignoring any available DDR5. This is ideal for workloads requiring 64GB of physical memory or less.

The second is Cache mode. Here, the HBM is used as a cache for the DDR5 system memory, effectively acting as an additional last-level cache (LLC). It’s not visible to software and, as such, doesn’t require any additional programming.

The third is Flat mode, or standard mode. It combines the DDR5 and HBM into a single contiguous addressable memory space. The system will likely fill the HBM first, and if requirements cross the 64GB mark, the additional data spills over to the DDR5 system memory.
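The three modes can be summed up in a toy capacity model. This is an illustrative sketch only: `MemoryMode`, `addressable_gb`, and `place_flat` are hypothetical names rather than any Intel or OS interface, and the HBM-first placement in Flat mode is the article's guess, not confirmed behavior.

```python
from enum import Enum

HBM_GB = 64  # HBM2e capacity quoted in the article


class MemoryMode(Enum):
    HBM_ONLY = "hbm-only"
    CACHE = "cache"
    FLAT = "flat"


def addressable_gb(mode, ddr5_gb):
    """Return how much memory software can address in the given mode."""
    if mode is MemoryMode.HBM_ONLY:
        return HBM_GB            # DDR5 is ignored entirely
    if mode is MemoryMode.CACHE:
        return ddr5_gb           # HBM is a transparent cache, not addressable
    return HBM_GB + ddr5_gb      # Flat: one contiguous address space


def place_flat(request_gb, ddr5_gb):
    """Split a Flat-mode allocation, filling HBM first (assumed policy)."""
    if request_gb > HBM_GB + ddr5_gb:
        raise MemoryError("request exceeds combined HBM + DDR5 capacity")
    in_hbm = min(request_gb, HBM_GB)
    return {"hbm_gb": in_hbm, "ddr5_gb": request_gb - in_hbm}


if __name__ == "__main__":
    for mode in MemoryMode:
        print(mode.value, addressable_gb(mode, ddr5_gb=512), "GB addressable")
    print(place_flat(100, ddr5_gb=512))
```

With a hypothetical 512GB of DDR5, a 100GB working set in Flat mode would land 64GB in HBM and the remaining 36GB in DDR5 under this model.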

The Sapphire Rapids-SP CPUs and Ponte Vecchio accelerators will together power the Aurora Supercomputer. More than 18,000 Xeon processors will be paired with 54,000+ Ponte Vecchio GPUs. Let’s have a look at Intel, AMD, and NVIDIA’s accelerators side by side and analyze how they stack up against one another:

| | Intel Ponte Vecchio | AMD MI250X | NVIDIA A100 80GB |
|---|---|---|---|
| Compute Units | 128 | 2 x 110 | 108 |
| Matrix Cores | 1,024 | 2 x 440 | 432 |
| INT8 Tensor | ? | 383 TOPS | 624 TOPS |
| FP16 Matrix | ? | 383 TFLOPS | 312 TFLOPS |
| FP64 Vector | ? | 47.9 TFLOPS | 9.7 TFLOPS |
| FP64 Matrix | ? | 95.7 TFLOPS | 19.5 TFLOPS |
| L2/L3 | 2 x 204 MB | 2 x 8 MB | 40 MB |
| VRAM Capacity | 128 GB | 128 GB | 80 GB |
| VRAM Type | 8 x HBM2e | 8 x HBM2e | 5 x HBM2e |
| Bus Width | ? | 8192-bit | 5120-bit |
| Bandwidth | ? | 3.2 TB/s | 2.0 TB/s |
| Process Node | Intel 7, TSMC N7, TSMC N5 | TSMC N6 | TSMC N7 |
| Release Date | 2022 | 11/2021 | 11/2020 |

What stands out almost immediately is the amount of L2 cache on Ponte Vecchio: 408MB versus just 16MB on the Instinct MI250X and 40MB on the A100. In terms of raw compute, though, AMD has far more vector units: 14,080 stream processors across 220 CUs (110 per die), for a peak FP64 matrix throughput of 95.7 TFLOPS, compared to just 19.5 TFLOPS on the NVIDIA A100. Even so, each of Intel’s Xe cores should be better fed, thanks to much higher cache hit rates and wider XMX matrix units. The MI250X has an 8192-bit wide bus paired with 128GB of HBM2e memory capable of transfer rates of up to 3.2TB/s. Intel hasn’t shared any details regarding the bus width or memory configuration of PVC just yet.
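For a rough sense of scale, the gaps above can be expressed as ratios. The sketch below uses only the cache sizes and FP64 matrix figures quoted in this article; the `SPECS` dictionary and `ratio` helper are illustrative, and Ponte Vecchio's throughput is left as `None` because Intel hasn't disclosed it.

```python
# Figures taken from the comparison in this article; None = not disclosed.
SPECS = {
    "Ponte Vecchio": {"l2_mb": 408, "fp64_matrix_tflops": None},
    "MI250X": {"l2_mb": 16, "fp64_matrix_tflops": 95.7},
    "A100 80GB": {"l2_mb": 40, "fp64_matrix_tflops": 19.5},
}


def ratio(metric, a, b):
    """Return SPECS[a][metric] / SPECS[b][metric], or None if either is unknown."""
    x, y = SPECS[a][metric], SPECS[b][metric]
    if x is None or y is None:
        return None
    return x / y


l2_vs_amd = ratio("l2_mb", "Ponte Vecchio", "MI250X")
l2_vs_nvidia = ratio("l2_mb", "Ponte Vecchio", "A100 80GB")
fp64_amd_vs_nvidia = ratio("fp64_matrix_tflops", "MI250X", "A100 80GB")

print(f"PVC L2 vs MI250X: {l2_vs_amd:.1f}x")
print(f"PVC L2 vs A100: {l2_vs_nvidia:.1f}x")
print(f"MI250X FP64 matrix vs A100: {fp64_amd_vs_nvidia:.1f}x")
```

On these numbers, Ponte Vecchio carries about 25x the L2 of the MI250X and about 10x that of the A100, while the MI250X has close to a 5x lead over the A100 in peak FP64 matrix throughput. These are peak paper figures, not measured performance.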