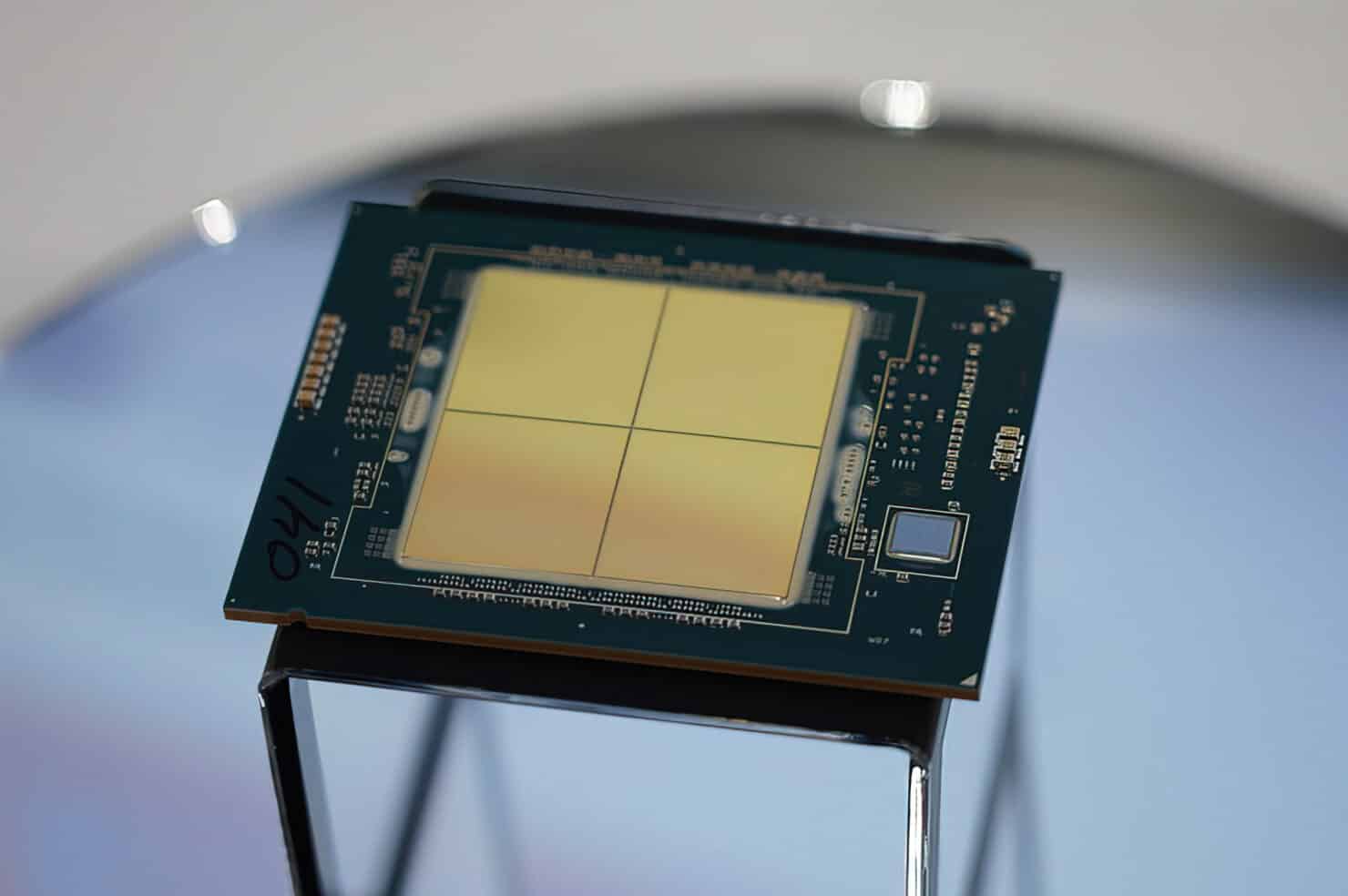

Intel has shared further details of its 4th Gen Xeon Scalable processors featuring on-package HBM (select SKUs) for improved HPC performance. Known as Sapphire Rapids HBM, these CPUs will carry up to 64GB of in-package HBM2e memory to accelerate bandwidth-heavy HPC and AI workloads. The HBM stacks will sit at opposite ends of the substrate, one next to each of the compute tiles (chiplets).

The HBM stacks will be connected to the compute dies using Intel’s EMIB packaging and interconnect technology. The standard variants will have a total of 10 EMIB interconnects with a bump pitch of 55 µm and a core pitch of 100 µm. The HBM part, on the other hand, will have 14 interconnects to pair the 8-Hi memory stacks with the primary chiplets. The overall package measures a massive 5,700 mm², roughly 28% larger than AMD’s upcoming Zen 4-based Epyc Genoa processors.

Intel is pitching Sapphire Rapids HBM as a high-performance HPC part, claiming over 3x the performance of the preceding Ice Lake-SP family. Most of these gains will come in bandwidth-hungry applications such as system modeling, physics, and energy. In more mainstream workloads, the increase will be less pronounced.

Backed by AVX-512 and AMX, Sapphire Rapids-SP should be an appealing portfolio for select customers, but the increased price and power consumption will make many think twice before committing.

The 4th Gen Xeon Scalable processors are expected to offer up to 56 cores and 112 threads, fabbed on the Intel 7 process. The Golden Cove core will push single-threaded performance up by 20%. Add 105 MB of L3 cache, eight-channel DDR5-4800 memory, and 80 PCIe Gen 5 lanes, and you’ve got a formidable server processor (with a formidable TDP of over 400W).
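
To put the eight-channel DDR5-4800 figure in context, here is a rough sketch of the theoretical peak bandwidth per socket. It assumes a 64-bit data path per channel (an assumption, not something Intel states here) and ignores real-world efficiency losses, so treat the result as an upper bound rather than a measured number. The function name is purely illustrative.

```python
def peak_ddr_bandwidth_gbs(channels: int, mega_transfers_per_s: int,
                           bus_width_bits: int = 64) -> float:
    """Theoretical peak memory bandwidth in GB/s (decimal gigabytes).

    bus_width_bits=64 is an assumed data width per channel, not a figure
    taken from the article.
    """
    bytes_per_transfer = bus_width_bits / 8
    bytes_per_second = channels * mega_transfers_per_s * 1e6 * bytes_per_transfer
    return bytes_per_second / 1e9


if __name__ == "__main__":
    # Eight channels of DDR5-4800, as quoted for Sapphire Rapids-SP
    print(f"{peak_ddr_bandwidth_gbs(8, 4800):.1f} GB/s")  # prints "307.2 GB/s"
```

That ceiling on conventional DRAM bandwidth is the backdrop against which the in-package HBM2e is meant to help bandwidth-bound workloads.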