With Intel’s 12th Gen Alder Lake-S processors slated to launch by the end of the year, the next generation of DRAM is on the horizon. DDR5 (Double Data Rate 5) modules should start hitting the retail market by the holiday season with speeds of up to 2,400MHz, or 4,800MT/s. In comparison, DDR4 tops out at a stock speed of 1,600MHz, or 3,200MT/s, as per JEDEC specifications.
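As a rough illustration of how the clock and transfer-rate figures relate, here is a minimal sketch. It assumes a standard 64 data bits per DIMM and computes theoretical peaks only; the function names are made up for this example.

```python
def data_rate_mts(io_clock_mhz: float) -> float:
    """DDR moves data on both clock edges, so two transfers per clock."""
    return 2 * io_clock_mhz


def peak_bandwidth_gbs(rate_mts: float, bus_width_bits: int = 64) -> float:
    """Theoretical peak bandwidth in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return rate_mts * 1e6 * bytes_per_transfer / 1e9


if __name__ == "__main__":
    # Clock figures taken from the paragraph above.
    for name, clock_mhz in (("DDR4-3200", 1600), ("DDR5-4800", 2400)):
        rate = data_rate_mts(clock_mhz)
        bandwidth = peak_bandwidth_gbs(rate)
        print(f"{name}: {rate:.0f} MT/s, {bandwidth:.1f} GB/s peak per DIMM")
```

Real-world throughput will be lower than these peaks, since refreshes, row activations and other overheads take up bus time.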

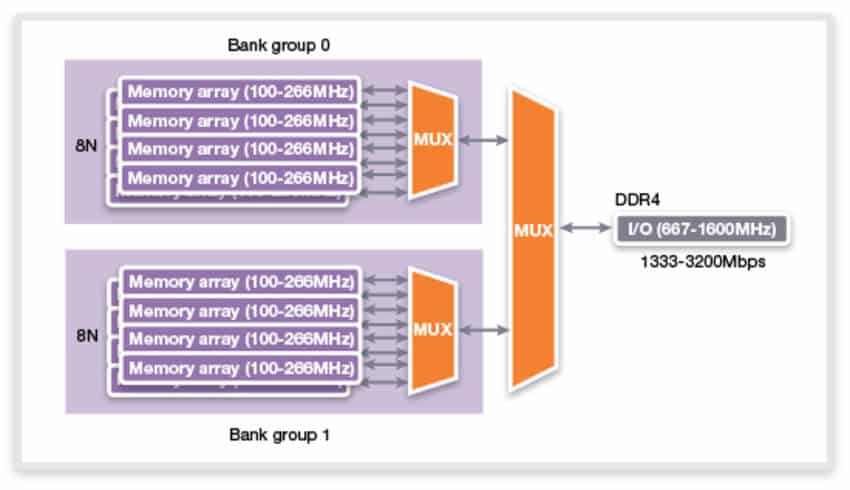

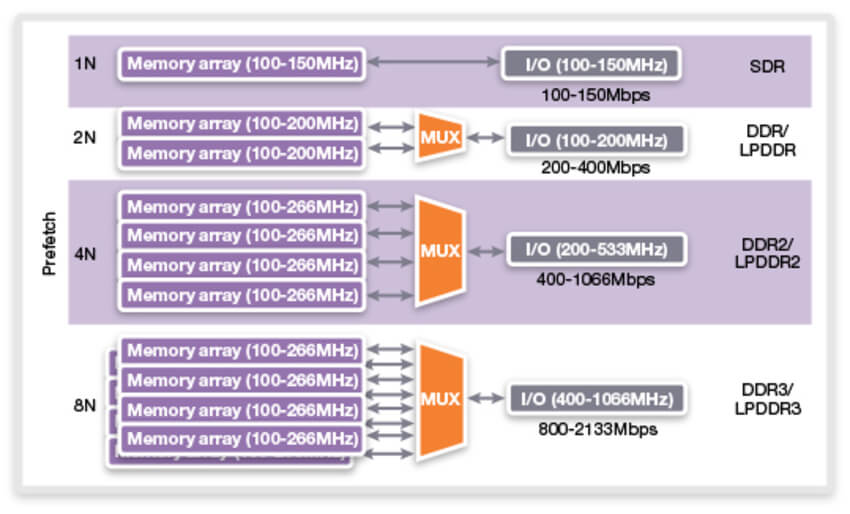

Several features facilitate this significant increase in operating frequency. The primary ones are the increase in burst length (BL) and prefetch to 16, the doubling of the memory banks to 32 (two or four per bank group, so the older 16-bank structure is still supported), fine-grained refreshes (refresh on a per-bank basis) and dual-channel DIMMs:

Burst Length: DDR4 has a burst length of 8, the same as DDR3, which on a 64-bit channel transfers 64 bytes from memory per burst. DDR5 increases this to 16, with optional support for a 32-length mode. Because each DDR5 channel is 32 bits wide, a single burst of 16 still delivers a full 64-byte cache line from just one of a DIMM’s two channels.

To understand what burst length means, you need to know how memory is accessed. When the CPU requests data that isn’t in its caches, the memory controller sends the address to the memory module, which selects the target bank and the required row, and then the column within that row (if the required row isn’t already open, it has to be loaded first). Keep in mind that there’s a delay after every step.
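The access sequence described above can be sketched with a toy model of a single bank. The latency values are hypothetical round numbers chosen for illustration, not figures from any datasheet, and the `Bank` class is invented for this example.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical latencies in clock cycles (illustrative only).
T_RP = 16   # precharge: close the currently open row
T_RCD = 16  # activate: load the requested row
T_CL = 16   # column access: read from the open row


@dataclass
class Bank:
    """Toy model of one DRAM bank that keeps at most one row open."""
    open_row: Optional[int] = None

    def read(self, row: int) -> int:
        """Return the delay in cycles before data for `row` is available."""
        if self.open_row == row:
            return T_CL  # row already open: only the column delay applies
        latency = T_RCD + T_CL  # the row has to be loaded first
        if self.open_row is not None:
            latency += T_RP  # a different row is open and must be closed
        self.open_row = row
        return latency


if __name__ == "__main__":
    bank = Bank()
    for row in (5, 5, 7):
        print(f"read row {row}: {bank.read(row)} cycles")
```

Reading the same row twice is cheaper the second time, which is why the delay "after every step" matters so much for access patterns.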

The requested data is then sent across the memory bus, not all at once but in bursts. For DDR4, each burst is 8 transfers long (64 bytes on a 64-bit channel). With DDR5, it has been increased to 16, with further scope up to 32. Two transfers take place per clock cycle, one on each clock edge, which is why the effective data rate is twice the clock frequency.
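To make the burst arithmetic concrete, the following sketch works out how much data one burst carries and how long it occupies the bus. It assumes a 64-bit channel for DDR4 and a 32-bit channel for DDR5; the helper names are made up for this example.

```python
def bytes_per_burst(burst_length: int, channel_width_bits: int) -> int:
    """Each transfer in a burst moves one channel-width of data."""
    return burst_length * channel_width_bits // 8


def burst_duration_ns(burst_length: int, data_rate_mts: float) -> float:
    """Time one burst occupies the data bus, in nanoseconds."""
    return burst_length / data_rate_mts * 1000


if __name__ == "__main__":
    # (name, burst length, channel width in bits, data rate in MT/s)
    configs = (
        ("DDR4-3200", 8, 64, 3200),
        ("DDR5-4800", 16, 32, 4800),
    )
    for name, bl, width, rate in configs:
        size = bytes_per_burst(bl, width)
        duration = burst_duration_ns(bl, rate)
        print(f"{name}: BL{bl} x {width}-bit = {size} bytes in {duration:.2f} ns")
```

Under these assumptions both generations move 64 bytes per burst, which matches the cache line size of most current CPUs.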

Burst Length

16n Prefetch: The prefetch on DDR5 has also been scaled up (from 8n on DDR4) to 16n to keep up with the increased burst length. Like DDR4, there will be two memory-bank arrays per channel connected via a MUX resulting in a higher effective prefetch rate (see above image).
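One way to see why a deeper prefetch helps is that the slow internal memory array only has to supply a new batch of bits once per prefetch-worth of transfers. The sketch below uses that simplified model, which ignores bank-group interleaving and other details; the function name is invented for this example.

```python
def core_clock_mhz(data_rate_mts: float, prefetch: int) -> float:
    """Simplified model: the array delivers `prefetch` bits per pin per
    internal cycle, so it can run at data_rate / prefetch."""
    return data_rate_mts / prefetch


if __name__ == "__main__":
    for name, rate, prefetch in (
        ("DDR4-3200 (8n)", 3200, 8),
        ("DDR5-4800 (16n)", 4800, 16),
        ("DDR5-6400 (16n)", 6400, 16),
    ):
        print(f"{name}: array runs at about {core_clock_mhz(rate, prefetch):.0f} MHz")
```

In this model, doubling the prefetch lets the external data rate double without asking the memory cells themselves to run any faster.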

Twice as many Bank Groups: DDR5 increases the total number of memory banks to 32, arranged as 8 bank groups of 4 banks (up from 16 banks in 4 bank groups on DDR4). This in turn increases bandwidth and performance, as more banks are accessible to the memory controller simultaneously, similar to how dual-rank improves upon single-rank.

Fine-grained bank refreshes: With DDR4, all banks are refreshed at the same time, so the memory controller can’t issue commands to any of them while a refresh is in progress. DDR5 adds Same Bank Refresh, which improves the effective bandwidth by refreshing only some banks at a time while the rest remain in use.
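The difference between the two refresh styles can be sketched by counting how many banks stay usable while a refresh is running. This assumes that a same-bank refresh takes one bank in every bank group out of service at a time, which is how the feature is generally described; the function and mode names are made up for this example.

```python
def banks_usable_during_refresh(total_banks: int, bank_groups: int, mode: str) -> int:
    """Count the banks that can still accept commands during a refresh."""
    if mode == "all_bank":
        return 0  # every bank is busy refreshing
    if mode == "same_bank":
        # Assumption: one bank per bank group refreshes at a time.
        return total_banks - bank_groups
    raise ValueError(f"unknown refresh mode: {mode}")


if __name__ == "__main__":
    ddr4 = banks_usable_during_refresh(16, 4, "all_bank")
    ddr5 = banks_usable_during_refresh(32, 8, "same_bank")
    print(f"DDR4 all-bank refresh: {ddr4} of 16 banks usable")
    print(f"DDR5 same-bank refresh: {ddr5} of 32 banks usable")
```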

Dual-channel DIMMs: Similar to its mobile counterpart, LPDDR, DDR5 will feature two 32-bit memory channels per DIMM (versus a single 64-bit channel on DDR4). This means we’ll start seeing quad-channel configurations with two DIMMs, although the total data width stays the same. The two channels per DIMM are independent and can issue commands separately. Since the burst length and prefetch are twice those of DDR4, each narrower channel still delivers as much data per burst, and the number of independent data transfers per DIMM doubles, improving the overall bandwidth.
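A quick sketch of the channel arithmetic shows what "quad-channel with two DIMMs" does and doesn't mean. The per-DIMM figures come from the paragraph above; the function and dictionary names are invented for this example.

```python
# (channels per DIMM, data bits per channel), as described in the text.
PER_DIMM = {"DDR4": (1, 64), "DDR5": (2, 32)}


def channel_layout(dimms: int, generation: str) -> dict:
    """Summarise independent channels and total data width for a system."""
    channels_per_dimm, width_bits = PER_DIMM[generation]
    channels = dimms * channels_per_dimm
    return {
        "channels": channels,
        "width_bits": width_bits,
        "total_bits": channels * width_bits,
    }


if __name__ == "__main__":
    for generation in ("DDR4", "DDR5"):
        layout = channel_layout(2, generation)
        print(
            f"2x {generation} DIMMs: {layout['channels']} channels x "
            f"{layout['width_bits']} bits = {layout['total_bits']} data bits"
        )
```

The total data width is unchanged; the gain comes from having twice as many channels that can each work on a different request at the same time.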

Integrated voltage regulation: DDR5 reduces both the VDD and VDDQ voltages from 1.2V to 1.1V, reducing power consumption. Voltage regulation has also been moved from the motherboard to the memory modules, which now carry their own power management IC (PMIC), reducing circuit complexity for the former.

DDR5 also increases the maximum memory density per die to 64Gb, up from 16Gb, while pushing the operating clocks as high as 4,200MHz (or 8,400MT/s). By adopting a Decision Feedback Equalization (DFE) circuit, which reduces reflective noise during the channels’ high-speed operation, DDR5 increases the speed per pin considerably.

On-die ECC: The presence of on-die ECC on DDR5 memory has been the subject of many discussions and a lot of confusion among consumers and the press alike. Unlike standard ECC, on-die ECC primarily aims to improve yields at advanced process nodes, thereby allowing for cheaper DRAM chips. On-die ECC only deals with errors that take place within the DRAM chip itself, in a cell or row. If there’s a bit-flip or data corruption while the data is being moved from the cell to the cache or the CPU, it won’t be corrected by on-die ECC. Standard ECC corrects data corruption both within the cell and as the data is moved to another device or an ECC-supported SoC. (Thanks to Ian Cutress for his explanation)
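The distinction can be illustrated with a tiny single-error-correcting code. The sketch below uses a textbook Hamming(7,4) code purely as a stand-in; it is not the code DDR5 chips actually use, and the function names are made up for this example. A bit flipped while the data sits in the array is repaired on readout, but a bit flipped afterwards, on the way to the CPU, passes through unnoticed.

```python
def encode(data):
    """Hamming(7,4): protect 4 data bits with 3 parity bits."""
    d1, d2, d3, d4 = data
    p1 = d1 ^ d2 ^ d4
    p2 = d1 ^ d3 ^ d4
    p4 = d2 ^ d3 ^ d4
    # Codeword positions 1..7 hold: p1 p2 d1 p4 d2 d3 d4
    return [p1, p2, d1, p4, d2, d3, d4]


def decode(codeword):
    """Return (data bits, corrected position), where 0 means no error seen."""
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s4 = c[3] ^ c[4] ^ c[5] ^ c[6]
    position = s1 + 2 * s2 + 4 * s4  # 1-based index of the flipped bit
    if position:
        c[position - 1] ^= 1  # repair the single-bit error
    return [c[2], c[4], c[5], c[6]], position


if __name__ == "__main__":
    original = [1, 0, 1, 1]

    stored = encode(original)
    stored[4] ^= 1  # bit flip inside the memory array
    read_out, fixed_at = decode(stored)
    print("after on-die correction:", read_out, "fixed position", fixed_at)

    on_bus = list(read_out)
    on_bus[0] ^= 1  # bit flip in transit, after the on-die check has run
    print("arrives at CPU:", on_bus, "matches original:", on_bus == original)
```

Catching the second kind of error requires the check bits to travel with the data to the memory controller, which is what standard ECC modules provide.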